Interview with Alexandra Pinto, Founder of Hoursec #25

Tackling AI’s energy consumption

Hello Human,

Welcome to Survivaltech.club’s newsletter #25!

Today, I have the pleasure of sharing an interview with Alexandra Pinto, founder of Hoursec. Hoursec is a Zurich-based startup tackling AI’s energy consumption.

The interview is an excellent follow-up to the latest deep dive on AI and climate. Together with Alexandra, we will learn what problem Hoursec is tackling and what its current challenges are, get some advice for future founders, and more!

🤖 Introducing Hoursec

The problem that Hoursec is tackling

In our last deep dive, we saw that while AI can help tackle climate change across industries, its high energy and carbon footprint can become a problem.

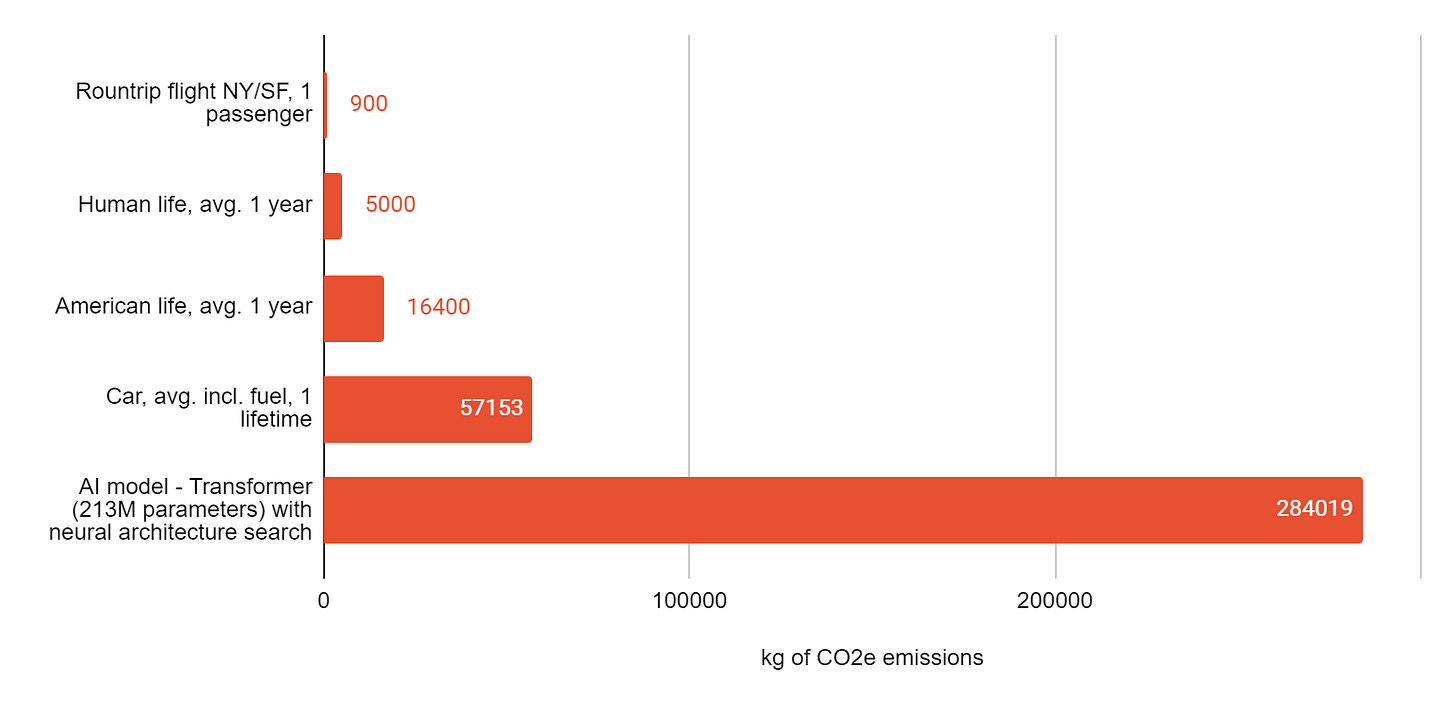

Strubell et al. (2019) estimated that training a single large AI model can emit more than 284 tons of carbon dioxide equivalent. This corresponds to nearly five times the lifetime emissions of the average American car. The accompanying figure shows how these emissions compare to other sources of CO2 emissions.
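For readers who want to check the comparison, here is a minimal sketch of the arithmetic. It assumes the two figures reported by Strubell et al. (2019) in pounds of CO2 equivalent: about 626,155 lbs for training a large Transformer with neural architecture search, and about 126,000 lbs for the lifetime of an average American car, fuel included. The variable names are only illustrative.

```python
# Figures as reported by Strubell et al. (2019), in pounds of CO2-equivalent.
LBS_PER_METRIC_TON = 2204.62

nas_training_lbs = 626_155   # large Transformer trained with neural architecture search
car_lifetime_lbs = 126_000   # average American car over its lifetime, fuel included

# Convert the training footprint to metric tons and compare it to one car lifetime.
nas_training_tons = nas_training_lbs / LBS_PER_METRIC_TON
car_lifetimes = nas_training_lbs / car_lifetime_lbs

print(f"Training emissions: {nas_training_tons:.0f} metric tons CO2e")
print(f"Equivalent car lifetimes: {car_lifetimes:.1f}")
```

The result is roughly 284 metric tons, or just under five car lifetimes, which matches the numbers quoted above.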

Paying attention to the choice of algorithms, their parameters, and the location of data centers is critical to reducing AI’s carbon footprint. This is what Hoursec is doing, and you will learn more about it shortly!

Hoursec’s solution

Hoursec is a Zurich-based startup aiming to decrease costs and energy requirements of training and deploying AI models.

The company’s solution results from years of research, and its approach is inspired by how neurons in the human body communicate with each other (synaptic functioning). The core idea is to shift away from training AI in data centers with large energy consumption and instead to optimize the algorithms directly for the chips they will be deployed on.

Hoursec’s algorithms maximize the use of information in the data and, in certain use cases, reduce the energy requirements of AI workloads by a factor of 3000.

🧠 Wisdom from Alexandra

What’s the founding story of Hoursec?

Hoursec’s technology results from academic research.

A few years ago, before my studies at ETH Zurich, I started working on neuroscientific research with domain experts in Analog Computing and Neurophysiology in the US. I developed methods to run equations that describe the information transmission in the brain’s synapses. Every day I focused on making these equations model the observations in humans more accurately and on running them faster with lighter components. We reached a point in the research where the results were astonishing. The energy requirements and efficiency of our algorithms were significantly better than those of state-of-the-art AI.

At this point, I started talking to several professors at ETH Zurich, coaches, and founders. They strongly encouraged me to pursue the entrepreneurial route and bring this technology into the world. Before these discussions, I had never thought about becoming a startup founder. However, being aware of AI’s energy consumption problem and potentially having a solution to mitigate it motivated me to start Hoursec.

You argue that your technology enables reducing the energy requirements for training AI algorithms. How does this work, and what could be the positive impact on the environment?

In machine learning, the goal is to develop algorithms to make predictions. To achieve this goal, there are two crucial steps: training and inference. In the training step, the algorithm learns patterns from data. In the inference step, the trained model is deployed on unseen data to produce predictions.
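To make the two steps concrete, here is a minimal, generic sketch in Python. It is not Hoursec’s method: it assumes a toy linear model fitted by least squares, and the function names `train` and `infer` as well as the data are hypothetical.

```python
def train(xs, ys):
    """Training step: learn a slope and intercept from example data (least squares)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    slope = cov / var
    intercept = mean_y - slope * mean_x
    return slope, intercept


def infer(model, x):
    """Inference step: apply the trained model to an input it has not seen."""
    slope, intercept = model
    return slope * x + intercept


# Hypothetical toy data following y = 2x + 1.
train_x = [0.0, 1.0, 2.0, 3.0]
train_y = [1.0, 3.0, 5.0, 7.0]

model = train(train_x, train_y)   # done once, typically the expensive part
prediction = infer(model, 10.0)   # done many times on new inputs
print(prediction)
```

In real projects the training step is where most of the computation happens, while inference is the cheap step that runs repeatedly once the model is deployed.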

The inefficiencies in developing AI algorithms arise from the fact that these two steps, training and inference, are mostly done separately. Models are optimized in training mode and not in inference mode. Often, these two steps also happen on different hardware. Training is done in data centers, while inference is done on chips in many real-world applications. What happens is that after training, models must be significantly simplified to run on small chips. Hence, much of the effort and energy in optimizing the algorithm parameters goes to waste.
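One common way of simplifying a model after training is to store its weights at lower precision. The sketch below assumes a generic 8-bit post-training quantization with made-up weights. It only illustrates the kind of simplification described above, not what Hoursec or any specific customer does, and the function names are hypothetical.

```python
def quantize_int8(weights):
    """Map float weights to integers in [-127, 127] using one shared scale."""
    scale = max(abs(w) for w in weights) / 127
    quantized = [round(w / scale) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from their integer representation."""
    return [q * scale for q in quantized]


# Hypothetical weights, as if produced by an expensive training run.
weights = [0.8213, -0.0041, 0.3307, -1.27]

quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
errors = [abs(w - r) for w, r in zip(weights, restored)]

print(quantized)
print(max(errors))
```

The small weight in the example is rounded to zero, so the precision that training spent on it is lost once the model is compressed for the chip.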

At Hoursec, we shift away from the paradigm of separating training and inference. We directly train our algorithms on the hardware chosen for inference. Our optimization routines ensure that the trained algorithms are well suited in size and complexity to the intended real-world application. This lets us avoid wasting resources on training large models in data centers and reduces the energy needs of our algorithms by several orders of magnitude.

What is the biggest challenge in getting customers to adopt your technology?

Our customers are in industries where machine learning needs to be deployed on chips and embedded systems. For example, a car manufacturer that wants to use AI for predictive maintenance of its assembly line’s machinery is an ideal customer. There, the predictive algorithms are embedded on chips in the assembly line’s systems, and our solution massively reduces the energy needed to develop and deploy the algorithms.

Nowadays, the main challenge in convincing our target customers to adopt the technology is threefold: (1) a process problem, (2) a knowledge problem, and (3) a data quality problem.

(1) Our customers are only now starting to deploy AI and machine learning at scale, so many processes on their end are not ready yet. (2) Our customers are often not AI experts, and it can be hard to explain our technology’s advantages precisely. (3) Often, the quality and quantity of the data our customers have at hand are insufficient for successful AI projects.

Do you see AI as a source of CO2 emissions or instead as a tool that could help us transition towards a greener economy?

If we are mindful in applying AI, it can be an asset in solving some environmental problems. By mindful, I mean reflecting thoroughly on the added value of AI in a specific application before applying it, and choosing a suitable algorithm complexity for the task.

Companies often apply AI to optimize superficial variables without forecasting the long-term data and energy requirements of off-the-shelf, open-source solutions. Hoursec, instead, offers a new, sustainable AI that takes these requirements into consideration and is less energy- and data-hungry.

What advice would you give to future or current climate tech founders?

Perhaps I can give a piece of advice specifically to people who come from academia and end up building a startup. I have been there myself, and I can say that this transition is not easy at all.

Academia and entrepreneurship are two different worlds that obey different sets of rules.

(1) Be ready to make a lot of mistakes and fail fast

Building a company is different from research because you will make many more mistakes, and you learn by failing fast. The key is to get back up and learn from those mistakes to improve yourself and your company.

(2) Think about the economic value of your solution

Even if your solution is for a good cause, such as reducing carbon emissions in specific fields, it must have economic value. This is what customers and investors look for, and it is crucial for the startup’s success. Coming from research, you may not be used to quantifying the economic value of technology, but this is something you have to learn fast to succeed in the entrepreneurial world.

If you liked this article, share it with your network and inspire more people to learn about science-based climate solutions and startups! 💚

Best, Matteo